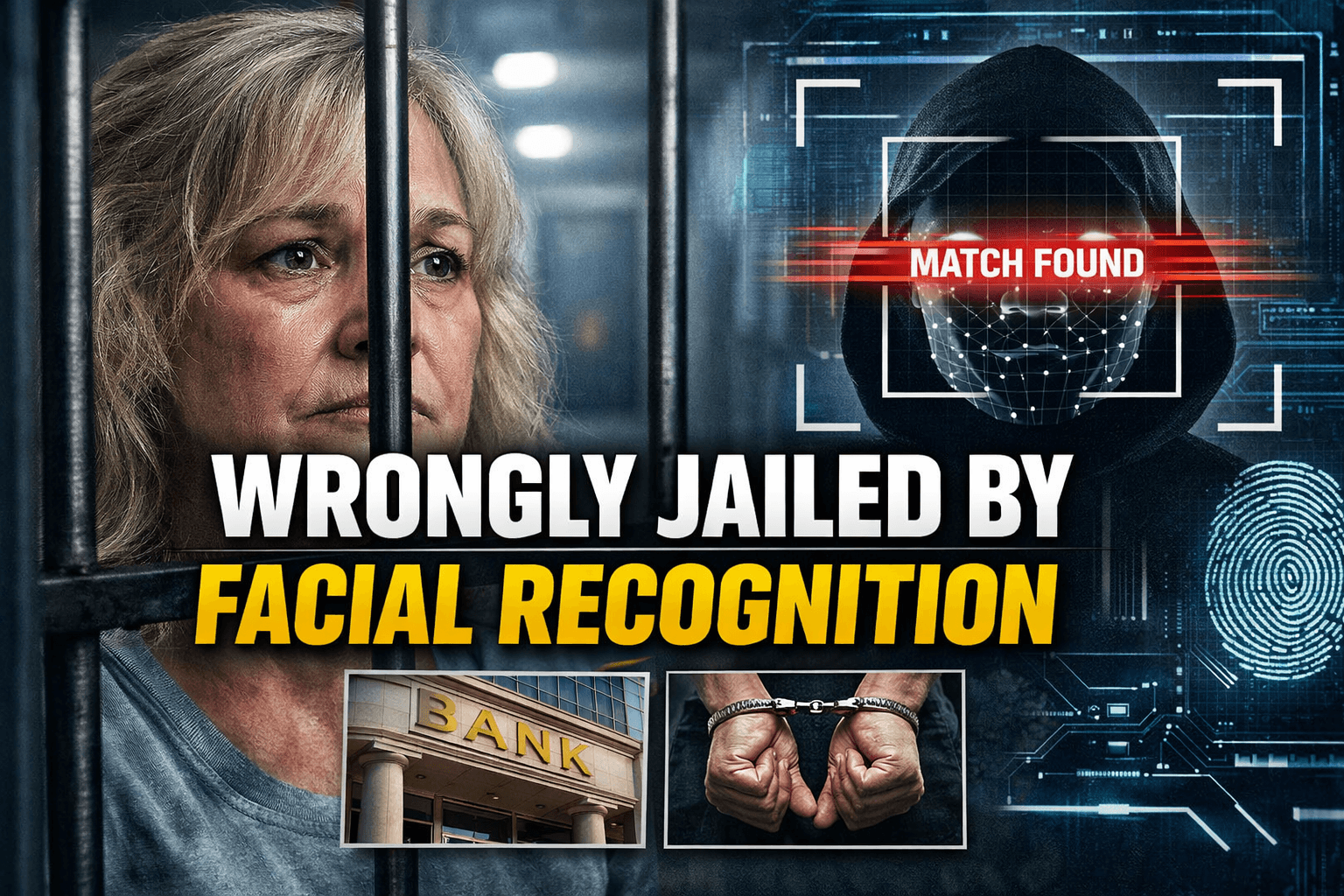

AI Facial Recognition Wrongful Arrest Case Raises Serious Questions About Police Technology and Civil Liberties

The case of Angela Lipps, a Tennessee woman who says she was wrongfully jailed for months after police relied on facial recognition software in a North Dakota bank fraud investigation, has become one of the clearest recent examples of the dangers of using artificial intelligence as a shortcut in criminal investigations. Reports across multiple outlets say Lipps, 50, was arrested in July 2025, held for months, later extradited to North Dakota, and ultimately released after records showed she was in Tennessee when the alleged crimes took place.

The story has drawn national attention because it is not simply about a mistaken arrest. It is about how facial recognition technology, when treated as more than an investigative lead, can lead to devastating real-world consequences. In Lipps’ case, attorneys and media reports say a facial recognition match linked her to surveillance images from a bank fraud case in or near Fargo, North Dakota, even though she says she had never been to the state. The resulting arrest, incarceration, and extradition have intensified scrutiny of AI tools in policing, particularly when police departments rely on them without strong independent verification.

According to reports, Lipps was arrested at her home in Carter County, Tennessee, by U.S. Marshals in July 2025 after a warrant was issued in North Dakota. Several accounts say she was arrested at gunpoint while babysitting children. Her attorneys later argued that the identification was built around facial recognition software and that the evidence should never have been enough, on its own, to support such serious criminal action.

Public reporting indicates that the case centered on police use of facial recognition software that produced a false match, and many reports identify the tool as Clearview AI. Still, the deeper issue is broader than one brand name. The bigger story is that police appear to have treated an AI-generated facial match as highly persuasive evidence, even though such systems are widely understood to generate possible matches rather than certainties.

Accounts of the timeline differ slightly. Some reports describe Lipps as spending “nearly six months” in jail, while others say “more than five months.” Either way, she spent months in custody before the case unraveled. Reports indicate she was arrested in July 2025 and released around late December 2025, after prosecutors dropped the charges once her attorney presented records that contradicted the identification.

Those records appear to have been crucial. Reports say Lipps’ lawyer used bank and purchase records to show that she was in Tennessee at the time the fraud offenses occurred, undermining the North Dakota case against her. That detail is especially striking because it suggests a basic location check may have raised serious doubts much earlier. Instead, the matter escalated to arrest, detention, and extradition.

The legal and human consequences were severe. Media coverage says Lipps lost her home, car, and even her dog during the months she was jailed. Some reports also say she was left in North Dakota after release and had to figure out how to get back home. Her attorneys are reportedly considering legal action as they gather records from the agencies involved.

This is where the AI facial recognition wrongful arrest case becomes more than an isolated tragedy. It speaks directly to a larger global debate over whether law enforcement agencies have moved too quickly in adopting facial recognition systems without enough safeguards, transparency, or accountability. Facial recognition tools are often marketed as powerful investigative aids, but civil liberties groups and technology critics have long warned that such systems can misidentify innocent people, especially when low-quality surveillance footage is involved or when investigators put too much confidence in algorithmic matches.

The Lipps case also revives an important principle that experts have repeated for years: facial recognition should generate leads, not conclusions. A software match should be the beginning of an investigation, not the end of one. Once a possible match appears, investigators should test it against location records, transaction history, witness accounts, device data, and other forms of corroboration. If those checks had been carried out rigorously and early in this case, the wrongful arrest might never have happened.

Public reaction has been particularly strong because the case appears to combine two growing anxieties at once: fear of overreaching surveillance and fear of uncritical faith in AI. Artificial intelligence often carries an aura of precision and neutrality, but in practice, an algorithm is only as good as the data it analyzes, the conditions under which it is used, and the judgment of the humans interpreting it. When blurred bank or security footage is fed into a system and police treat the output as highly reliable, the risk of catastrophic error rises sharply.

The case has also drawn attention to the agencies involved. Reports say West Fargo Police acknowledged errors, and former or current police leadership discussed changes in review procedures after the incident. One report says the facial recognition tool was used without proper oversight and has since been banned in that agency’s workflow. Another says local authorities reviewed procedures but did not issue a formal apology. Those details matter because they show officials themselves appear to recognize that something went badly wrong in the investigative chain.

From a policy perspective, the AI facial recognition wrongful arrest case raises several big questions. First, what standard of evidence should police meet before seeking an arrest warrant tied to facial recognition? Second, should AI-generated identifications be disclosed explicitly to defense attorneys and courts at the earliest possible stage? Third, should agencies be required to document what independent verification they performed before acting on a facial recognition hit? And finally, who is accountable when a bad AI-assisted identification destroys a person’s life for months?

These questions are becoming more urgent because AI tools are spreading faster than regulatory frameworks. Police departments often adopt emerging technologies in the name of efficiency, but efficiency is not the same as justice. A wrongful arrest can cost someone their home, employment, family stability, reputation, and mental well-being. In that sense, the damage caused by a false facial recognition match is not technical. It is profoundly personal. Lipps’ case illustrates how quickly a digital error can become a human crisis.

The case may also shape future court fights over the admissibility and role of AI tools in criminal investigations. Defense attorneys across the United States are already pushing for more disclosure around algorithmic systems used by police. Cases like this could strengthen arguments that prosecutors and law enforcement agencies should not be allowed to obscure how AI was used to generate a suspect list or probable cause theory. If an algorithm helped put someone in jail, courts may increasingly demand to know how it works, what its error rate is, and what safeguards were or were not followed. That is an inference from the broader legal debate, not a reported ruling, though the public reaction to this case lends it weight.

There is also a deeper trust issue at the center of this story. AI systems are often adopted because they promise consistency, speed, and pattern recognition at a scale humans cannot match. But when one of those systems contributes to jailing an innocent person for months, public confidence is shaken not only in the software, but in the institutions using it. For many people, the lesson is simple: if police cannot explain why they trusted the machine more than verifiable records, then the problem is not just the algorithm. It is the system around it.

The takeaway from this story is not that AI is always unreliable or that facial recognition can never help solve crimes. It is that such systems are dangerous when used carelessly, opaquely, or too confidently. In the AI facial recognition wrongful arrest case involving Angela Lipps, the public record as currently reported points to a false facial match, months of incarceration, evidence showing she was elsewhere, and a late collapse of the prosecution after immense harm had already been done.

For police departments, the message is stark: facial recognition must remain a lead-generation tool, subject to rigorous human review and corroboration. For lawmakers, the case underscores the need for clearer rules, disclosure standards, and accountability mechanisms. And for the public, it is a chilling reminder that when AI errors enter the justice system, innocent people can lose months of their lives before the truth catches up.

Conclusion

The AI facial recognition wrongful arrest case involving Angela Lipps is one of the strongest warnings yet about the risks of unchecked police reliance on artificial intelligence. The case is not merely about a software glitch. It is about what happens when technology is trusted without enough skepticism, when basic fact-checking comes too late, and when a human being bears the cost of an algorithmic mistake. As more police departments adopt AI-assisted tools, this case may become a landmark example in the argument for tighter oversight, stronger civil liberties protections, and a far more cautious approach to facial recognition in criminal justice.